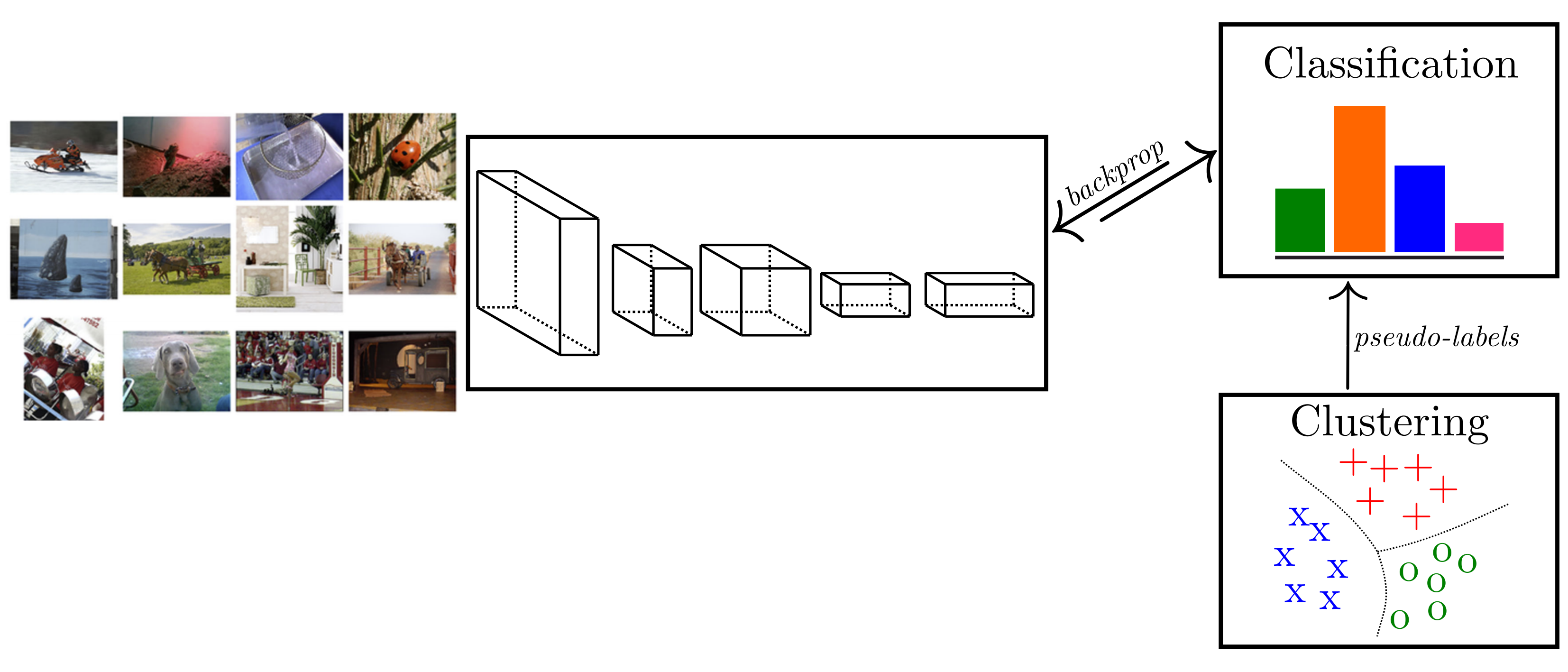

Understanding cross-entropy first required discussing loss functions in general and activation functions, i.e., converting discrete predictions to continuous ones. Suppose we have n classes with linear scores A1, A2, …, An, and we want the probability of each class. The softmax activation function computes the probability of class i as \(P(i) = \dfrac{e^{A_i}}{\sum_{j=1}^{n} e^{A_j}}\). We’ll now dive deep into the cross-entropy function.

Cross-entropy

Claude Shannon introduced the concept of information entropy in his 1948 paper, “A Mathematical Theory of Communication.” According to Shannon, the entropy of a random variable is the average level of “information,” “surprise,” or “uncertainty” inherent in the variable’s possible outcomes. This entropy is related to the error functions from our introduction: the average level of uncertainty corresponds to the error. Cross-entropy builds upon the idea of information-theoretic entropy and measures the difference between two probability distributions for a given random variable or set of events. It can be applied to both binary and multi-class classification problems.

Let’s return to the earlier example, where we answer whether a student will pass the SAT exams, and discuss the differences when using cross-entropy in each scenario. In this case, we work with four students. We have two models, A and B, that predict the likelihood of each of the four students passing the exam, as shown in the figure below.
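To make the softmax formula and cross-entropy concrete, here is a minimal pure-Python sketch. The function names are ours, and the four students’ labels and the two models’ predicted probabilities are hypothetical values, not the ones from the figure. The loss is summed over students, in line with the summed error used in this post; averaging is also common.

```python
import math

def softmax(scores):
    """Turn linear scores A1..An into class probabilities that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow and leaves the result unchanged
    exps = [math.exp(a - m) for a in scores]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def binary_cross_entropy(labels, probs):
    """Sum over students of -[y*ln(p) + (1 - y)*ln(1 - p)].
    labels: 1 = passed, 0 = failed; probs: predicted probability of passing."""
    return -sum(y * math.log(p) + (1 - y) * math.log(1 - p)
                for y, p in zip(labels, probs))

# Hypothetical data for four students (not the values from the figure).
labels = [1, 1, 0, 0]
model_a = [0.6, 0.7, 0.4, 0.3]
model_b = [0.9, 0.8, 0.2, 0.1]

print("softmax:", softmax([2.0, 1.0, 0.1]))
print("entropy of a fair coin:", entropy([0.5, 0.5]))  # ln 2, about 0.693
print("model A cross-entropy:", binary_cross_entropy(labels, model_a))
print("model B cross-entropy:", binary_cross_entropy(labels, model_b))
```

Model B assigns higher probability to what actually happened for every student, so its cross-entropy comes out lower than model A’s.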
For example, in Illustration 2 the model’s prediction output is discrete: it determines whether a student will pass or fail, answering the question “Will student A pass the SAT exams?” A continuous question would be “How likely is student A to pass the SAT exams?”, where answers such as 30% or 70% are possible. In Illustration 1, by contrast, the mountain slope varies, so we can detect small variations in our height (error) and take the necessary step, which is the case with continuous error functions.

To convert the error function from discrete to continuous, we apply an activation function to each student’s linear score, which keeps the model’s prediction output in the range (0, 1). Our example is a binary classification problem, with two classes: pass or fail. In this case, the activation function applied is the sigmoid activation function, \(\sigma(z) = \dfrac{1}{1 + e^{-z}}\), whose exponential maps any linear score to a probability between 0 and 1. By doing this, the error is no longer a count of the two misclassified students but the sum of each student’s individual error. Using probabilities for Illustration 2 makes it easy to sum each student’s error (how far the prediction is from the student’s actual result), so we can move the prediction line in small steps until we reach the minimum summed error.
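The difference between the discrete question (“Will the student pass?”) and the continuous one (“How likely is the student to pass?”) can be sketched in a few lines of Python. The linear scores and results below are hypothetical, and the per-student error is assumed to be the negative log of the probability given to the actual outcome, which is the quantity cross-entropy sums.

```python
import math

def step(score):
    """Discrete prediction: answers 'Will the student pass?' with 1 (yes) or 0 (no)."""
    return 1 if score >= 0 else 0

def sigmoid(score):
    """Continuous prediction: 'How likely is the student to pass?' as a value in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-score))

def student_error(score, passed):
    """Assumed per-student error: -ln of the probability assigned to the actual outcome."""
    p = sigmoid(score)
    return -math.log(p if passed == 1 else 1.0 - p)

# Hypothetical linear scores for four students and their actual results (1 = pass).
scores = [2.0, 0.5, -0.3, -1.5]
results = [1, 0, 1, 0]

for s, y in zip(scores, results):
    print(f"score={s:+.1f}  step={step(s)}  p(pass)={sigmoid(s):.2f}  error={student_error(s, y):.3f}")

# The summed error that the prediction line is moved to minimize.
total_error = sum(student_error(s, y) for s, y in zip(scores, results))
print("summed error:", round(total_error, 3))
```

The step function only says right or wrong, while the sigmoid gives every student a graded error, so a confidently wrong prediction costs more than a borderline one.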

To fix the error, we move the line so that all the positive and negative predictions fall in the right area. In most real-life machine learning applications, however, we rarely move the prediction line as drastically as we did above; instead, we take small steps to minimize the error. If we move in small steps in the above example, we might end up with the same error after each step, which is the case with discrete error functions.
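Why small steps stall on a discrete error function can be shown with a one-dimensional sketch. Everything here is hypothetical: four students described only by hours studied, and a “line” that is a threshold t (predict pass if hours ≥ t). The discrete error counts misclassified students; the continuous error is the summed cross-entropy with p(pass) = sigmoid(hours − t).

```python
import math

# Hypothetical 1-D data: hours studied and whether the student passed (1) or not (0).
hours = [1.0, 2.0, 3.0, 4.0]
passed = [0, 1, 0, 1]

def discrete_error(t):
    """Number of students misclassified by the line 'predict pass if hours >= t'."""
    return sum((1 if h >= t else 0) != y for h, y in zip(hours, passed))

def continuous_error(t):
    """Summed cross-entropy when p(pass) = sigmoid(hours - t)."""
    total = 0.0
    for h, y in zip(hours, passed):
        p = 1.0 / (1.0 + math.exp(-(h - t)))
        total += -math.log(p if y == 1 else 1.0 - p)
    return total

# Move the line in small steps and compare the two error functions.
thresholds = [3.5, 3.4, 3.3, 3.2, 3.1]
for t in thresholds:
    print(f"t={t:.1f}  misclassified={discrete_error(t)}  cross-entropy={continuous_error(t):.4f}")
```

Every step leaves the misclassified count at 1, so the discrete error gives no hint about which direction helps, while the cross-entropy decreases at each step and confirms that the small moves are going the right way.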

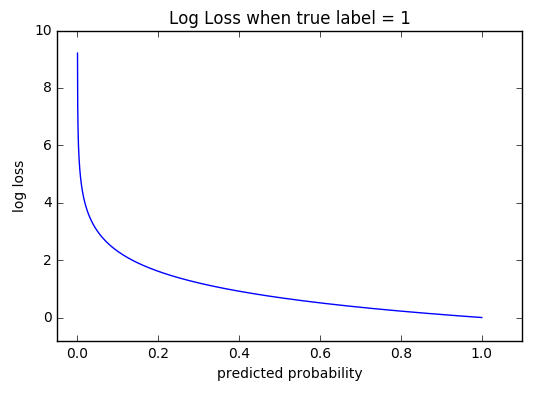

Cross entropy loss function code

Cross-entropy loss is calculated after the model produces yhat (the predicted value); it measures the difference between the labels and the predicted values. It is defined as \(\Large E = -\sum_{i} y_i \log(\hat{y}_i) \:\:\:\: eq(3)\), where \(y\) is the one-hot label and \(\hat{y}\) is the softmax output.

CrossEntropyLoss derivative implementation:

```python
import numpy as np

# CrossEntropyLoss Derivative implementation
# cross_entropy_loss() and input_one_hot() are the post's own helpers, defined elsewhere (not shown here)
softmaxed, loss = cross_entropy_loss(x, y)
print("loss:", loss)

batch, seq, size = x.shape
target_one_hot = np.zeros((batch, seq, size))  # Adapting the shape of Y
for batnum in range(batch):
    for i in range(seq):
        target_one_hot[batnum][i] = input_one_hot(y[batnum][i], size)

# Combined softmax + cross-entropy gradient: softmax output minus one-hot target
dy = softmaxed.copy()
dy = dy - target_one_hot
print("gradient:", dy)  # prints out gradient
```

As you can see, this covers the idea behind softmax and cross_entropy_loss, and their combined use and implementation. Their combined gradient, the softmax output minus the one-hot target, is one of the most used formulas in deep learning. Implemented code often lends perspective to theory, as you see the various shapes of input and output. Hopefully, cross_entropy_loss’s combined gradient in Listing-5 does the same.
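The combined gradient can be checked numerically. The sketch below is a hypothetical, self-contained re-creation for a single score vector in pure Python, not the post’s Listing-5 (which works on a batch × seq × size array); the function names, scores, and target class are ours. It compares softmax(x) − one_hot(y) against central finite differences of the loss in eq(3).

```python
import math

def softmax(x):
    """Numerically stable softmax of a list of scores."""
    m = max(x)
    exps = [math.exp(v - m) for v in x]
    s = sum(exps)
    return [e / s for e in exps]

def cross_entropy(x, target):
    """E = -sum_i y_i * log(softmax(x)_i) with one-hot y, which is -log p[target]."""
    return -math.log(softmax(x)[target])

def analytic_grad(x, target):
    """Combined softmax + cross-entropy gradient: softmax(x) - one_hot(target)."""
    p = softmax(x)
    return [pi - (1.0 if i == target else 0.0) for i, pi in enumerate(p)]

def numeric_grad(x, target, h=1e-6):
    """Central finite differences of the loss with respect to each score."""
    grad = []
    for i in range(len(x)):
        x_plus = list(x)
        x_plus[i] += h
        x_minus = list(x)
        x_minus[i] -= h
        grad.append((cross_entropy(x_plus, target) - cross_entropy(x_minus, target)) / (2 * h))
    return grad

scores = [1.5, -0.3, 0.8, 2.1]  # hypothetical scores for 4 classes
target = 2                      # hypothetical true class index
print("analytic:", analytic_grad(scores, target))
print("numeric: ", numeric_grad(scores, target))
```

The two gradients agree to within finite-difference error, and the analytic one sums to zero because the softmax probabilities and the one-hot target each sum to 1.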
